Note

This page is a work in progress. It may contain incomplete or incorrect information.

Monitoring Best Practices¶

Introduction¶

Observability (also known as “monitoring”) lets you determine if the Daml Enterprise solution is healthy or not. If the state is not healthy, observability helps diagnose the root cause. There are three parts to observability: metrics, logs, and traces. These are described in this section.

To avoid becoming overwhelmed by the number of metrics and log messages, follow these steps:

Start with the section Hands-On with the Daml Enterprise - Observability Example below. It is a shortcut to learning what is important, and it serves as a starting point and inspiration when building your metric monitoring.

For an overview of how most metrics are exposed, read the section Golden Signals and Key Metrics Quick Start below. It describes the philosophy behind metric naming and labeling.

The remaining sections provide references to more detailed information.

Hands-On with the Daml Enterprise - Observability Example¶

The Daml Enterprise - Observability Example GitHub repository provides a complete reference example for exploring the metrics that Daml Enterprise exposes. You can use it to explore the collection, aggregation, filtering, and visualization of metrics. It is self-contained, with the following components:

An example Docker compose file to create a run-time for all the components

Some shell scripts to generate requests to the Daml Enterprise solution

A Prometheus config file to scrape the metrics data

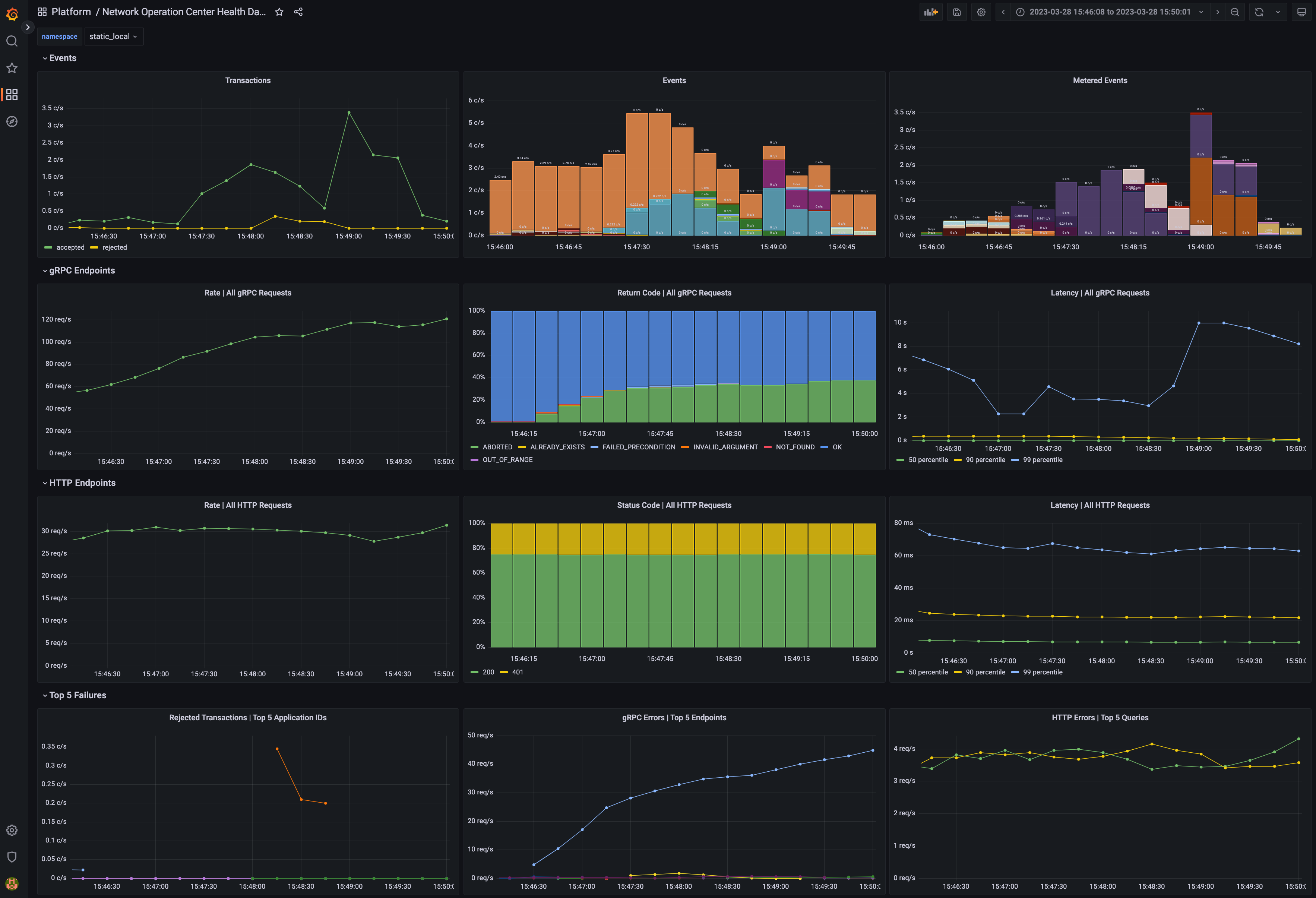

A Grafana template file(s) to visualize the metrics in a meaningful way, such as shown below in the example dashboard

Dashboard with metrics¶

Golden Signals and Key Metrics Quick Start¶

The best practice for monitoring a microservices application is an approach known as the Golden Signals, or the RED method. In this approach, metric monitoring determines whether the application is healthy and, if it is not, which service is the root cause of the issue. The Golden Signals are supported for all HTTP and gRPC endpoints. Key metrics specific to Daml Enterprise are also available. These are described below.

The following Golden Signal metrics for each HTTP and gRPC API are available:

Input request rate, as a counter

Error rate, as a counter (discussed below)

Latency (the time to process a request), as a histogram

Size of the payload, as a counter, following the Apache HTTP precedent
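Because the request and error signals above are exposed as counters, a rate has to be derived from the change between two or more scrapes. The following is a minimal Python sketch of that arithmetic, assuming samples are available as (timestamp, value) pairs. The function names are illustrative and are not part of Daml Enterprise or Prometheus. Treating a drop in value as a counter reset follows the usual Prometheus convention.

```python
from typing import Sequence, Tuple


def counter_rate(samples: Sequence[Tuple[float, float]]) -> float:
    """Average per-second increase of a monotonically increasing counter.

    `samples` is a time-ordered sequence of (unix_seconds, counter_value).
    A drop in value is treated as a counter reset (for example a process
    restart), and the post-reset value counts as that interval's increase.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    increase = 0.0
    for (_, prev), (_, cur) in zip(samples, samples[1:]):
        increase += (cur - prev) if cur >= prev else cur
    elapsed = samples[-1][0] - samples[0][0]
    if elapsed <= 0:
        raise ValueError("samples must span a positive time interval")
    return increase / elapsed


def error_ratio(total_increase: float, error_increase: float) -> float:
    """Fraction of requests that failed over the same window."""
    return error_increase / total_increase if total_increase else 0.0
```

In a Prometheus deployment this calculation is normally done by the query layer rather than by hand; the sketch only shows what such a query computes.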

You can filter or aggregate each metric using its accompanying labels. The instrumentation labels added to each HTTP API metric are as follows:

http_verb: the HTTP verb (for example: GET, POST)

http_status: the status code (for example: 200, 401, 403, 504)

host: the host identifier

daml_version: the Daml release number

service: a string to identify what Daml service or Canton component is running in this process (for example: participant, sequencer, json_api)

path: the request made to the endpoint (for example: /v2/commands/submit-and-wait, /v2/state/active-contracts)

The gRPC protocol is layered on top of HTTP/2, so certain labels (such as the daml_version and service) from the above section are included. The labels added by default to each gRPC API metric are as follows:

canton_version: the Canton protocol version

grpc_code: the human-readable status code for gRPC (for example: OK, CANCELLED, DEADLINE_EXCEEDED)

grpc_client_type and grpc_server_type: the type of the client/server gRPC request

grpc_service_name and grpc_method_name: the protobuf package and service names
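To illustrate filtering and aggregating by label, the following Python sketch parses a few lines in the Prometheus text exposition format and sums a counter by one label. It is a sketch under stated assumptions: the metric name daml_http_requests_total and the sample values are made up for the example, and the parser handles only simple sample lines.

```python
import re
from collections import defaultdict

# One sample line: name, optional {labels}, value (a trailing timestamp is ignored).
_SAMPLE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)'
)
_LABEL = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')

# Hypothetical scrape output; the metric name and values are assumptions.
EXAMPLE = """\
# TYPE daml_http_requests_total counter
daml_http_requests_total{http_verb="POST",http_status="200",path="/v2/commands/submit-and-wait"} 40
daml_http_requests_total{http_verb="POST",http_status="504",path="/v2/commands/submit-and-wait"} 2
daml_http_requests_total{http_verb="POST",http_status="200",path="/v2/state/active-contracts"} 8
"""


def parse_samples(text):
    """Yield (metric_name, labels_dict, value) for each sample line."""
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = _SAMPLE.match(line)
        if match:
            labels = dict(_LABEL.findall(match.group("labels") or ""))
            yield match.group("name"), labels, float(match.group("value"))


def sum_by(samples, metric, label):
    """Sum the values of `metric`, grouped by the value of `label`."""
    totals = defaultdict(float)
    for name, labels, value in samples:
        if name == metric:
            totals[labels.get(label, "")] += value
    return dict(totals)
```

For example, `sum_by(parse_samples(EXAMPLE), "daml_http_requests_total", "http_status")` groups the three sample lines by status code.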

The following other key metrics are monitored:

A binary gauge indicates whether the node is healthy or not healthy. This can also be used to infer which node is passive in a highly available configuration because it will show as not being healthy, while the active node is always healthy.

A binary gauge signals whether a node is active or passive, for identifying which node is the active node.

A binary gauge detects when pruning is occurring.

Each participant node measures the count of the inflight (dirty) requests so the user can see if the maxDirtyRequests limit is close to being hit. The metrics are: canton_dirty_requests and canton_max_dirty_requests.

Each participant node records the distribution of events (updates) received by the participant and allows drill-down by event type (package upload, party creation, or transaction), status (success or failure), participant ID, and application ID (if available). The counter is called daml_indexer_events_total.

The ledger event requests are totaled in a counter called daml_indexer_metered_events_total.

JVM garbage collection metrics are collected.

This list is not exhaustive. It highlights the most important metrics.
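As an illustration of how the two dirty-request metrics can be combined, the following Python sketch computes how close a participant is to its limit. The function names and the 0.8 threshold are arbitrary choices for the example, and treating a non-positive limit as "no limit configured" is an assumption, not documented behavior.

```python
from typing import Optional


def saturation(in_flight: float, limit: float) -> Optional[float]:
    """Ratio of canton_dirty_requests to canton_max_dirty_requests.

    Returns None when the limit is not positive (assumed here to mean
    that no limit is configured).
    """
    if limit <= 0:
        return None
    return in_flight / limit


def near_limit(in_flight: float, limit: float, threshold: float = 0.8) -> bool:
    """True when in-flight requests have reached `threshold` of the limit."""
    ratio = saturation(in_flight, limit)
    return ratio is not None and ratio >= threshold
```

The same ratio can serve as the basis for an alert rule in whichever monitoring system evaluates the scraped values.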

Set Up Metrics Scraping¶

Enable the Prometheus Reporter¶

Prometheus is recommended for metrics reporting. Other reporters (jmx, graphite, and csv) are supported, but they are deprecated. Any such reporter should be migrated to Prometheus.

Prometheus can be enabled using:

canton.monitoring.metrics.reporters = [{
  type = prometheus
  address = "localhost" // default
  port = 9000 // default
}]
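Once the reporter is enabled, a quick way to check what is exposed is to fetch the endpoint and list the declared metric types. The following Python sketch assumes the reporter serves the Prometheus text format at the conventional /metrics path on the configured address and port; that path is an assumption, so verify it against your deployment. The helper names are illustrative.

```python
import urllib.request


def metrics_url(address: str = "localhost", port: int = 9000, path: str = "/metrics") -> str:
    """Build the scrape URL from the reporter's address and port settings."""
    return f"http://{address}:{port}{path}"


def declared_types(exposition: str) -> dict:
    """Map metric name to type using the '# TYPE <name> <type>' lines."""
    types = {}
    for line in exposition.splitlines():
        parts = line.split()
        if len(parts) == 4 and parts[0] == "#" and parts[1] == "TYPE":
            types[parts[2]] = parts[3]
    return types


def scrape(url: str, timeout: float = 5.0) -> str:
    """Fetch the raw exposition text from a running node."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8")


# Usage against a running node (requires network access to the reporter):
#   for name, kind in sorted(declared_types(scrape(metrics_url())).items()):
#       print(kind, name)
```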

Prometheus-Only Metrics¶

Some metrics are available only when using the Prometheus reporter. These metrics include common gRPC and HTTP metrics (which help you to measure the four golden signals), and JVM GC and memory usage metrics (if enabled). The metrics are documented in detail below.

Any metric marked with * is available only when using the Prometheus reporter.

Deprecated Reporters¶

JMX-based reporting (for testing purposes only) can be enabled using:

canton.monitoring.metrics.reporters = [{ type = jmx }]

Additionally, metrics can be written to a file:

canton.monitoring.metrics.reporters = [{
  type = jmx
}, {
  type = csv
  directory = "metrics"
  interval = 5s // default
  filters = [{
    contains = "canton"
  }]
}]

or reported via Graphite (to Grafana) using:

canton.monitoring.metrics.reporters = [{
  type = graphite
  address = "localhost" // default
  port = 2003
  prefix.type = hostname // default
  interval = 30s // default
  filters = [{
    contains = "canton"
  }]
}]

When using the graphite or the csv reporter, Canton periodically evaluates all metrics matching the given filters. Filter for only those metrics that are relevant to you.

In addition to Canton metrics, the process can also report Daml metrics (of the Ledger API server). Optionally, JVM metrics can be included using:

canton.monitoring.metrics.report-jvm-metrics = yes // default no

Metrics¶

The following sections contain the common metrics exposed for Daml services supporting a Prometheus metrics reporter.

For the metric types referenced below, see the relevant Prometheus documentation.

Participant Metrics¶

daml.cache.evicted_weight¶

Summary: The sum of weights of cache entries evicted.

Description: The total weight of the entries evicted from the cache.

Type: counter

Qualification: Debug

daml.cache.evictions¶

Summary: The number of the evicted cache entries.

Description: When an entry is evicted from the cache, the counter is incremented.

Type: counter

Qualification: Debug

daml.cache.hits¶

Summary: The number of cache hits.

Description: When a cache lookup encounters an existing cache entry, the counter is incremented.

Type: counter

Qualification: Debug

daml.cache.misses¶

Summary: The number of cache misses.

Description: When a cache lookup first encounters a missing cache entry, the counter is incremented.

Type: counter

Qualification: Debug

daml.db-storage.general.executor.exectime¶

Summary: Execution time metric for database tasks

Description: The time a task is running on the database is measured using this metric.

Type: timer

Qualification: Debug

daml.db-storage.general.executor.load¶

Summary: Load of database pool

Description: Database queries run as tasks on an async executor. This metric shows the current number of queries running in parallel divided by the number of database connections for this database connection pool.

Type: gauge

Qualification: Saturation
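The load value described above is a simple ratio, which the following Python sketch makes explicit. The function and parameter names are illustrative only. By this definition a value of 1.0 means that every connection in the pool is running a query.

```python
def executor_load(running_queries: int, pool_connections: int) -> float:
    """Queries currently running in parallel divided by the pool size."""
    if pool_connections <= 0:
        raise ValueError("pool_connections must be positive")
    return running_queries / pool_connections
```

For example, 6 queries running on a pool of 8 connections gives a load of 0.75.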

daml.db-storage.general.executor.queued¶

Summary: Number of database access tasks waiting in queue

Description: Database access tasks get scheduled in this queue and get executed using one of the existing asynchronous sessions. A large queue indicates that the database connection is not able to deal with the large number of requests. Note that the queue has a maximum size. Tasks that do not fit into the queue will be retried, but won’t show up in this metric.

Type: counter

Qualification: Saturation

daml.db-storage.general.executor.running¶

Summary: Number of database access tasks currently running

Description: Database access tasks run on an async executor. This metric shows the current number of tasks running in parallel.

Type: gauge

Qualification: Debug

daml.db-storage.general.executor.waittime¶

Summary: Scheduling time metric for database tasks

Description: Every database query is scheduled using an asynchronous executor with a queue. The time a task is waiting in this queue is monitored using this metric.

Type: timer

Qualification: Debug

daml.db-storage.write.executor.exectime¶

Summary: Execution time metric for database tasks

Description: The time a task is running on the database is measured using this metric.

Type: timer

Qualification: Debug

daml.db-storage.write.executor.load¶

Summary: Load of database pool

Description: Database queries run as tasks on an async executor. This metric shows the current number of queries running in parallel divided by the number of database connections for this database connection pool.

Type: gauge

Qualification: Saturation

daml.db-storage.write.executor.queued¶

Summary: Number of database access tasks waiting in queue

Description: Database access tasks get scheduled in this queue and get executed using one of the existing asynchronous sessions. A large queue indicates that the database connection is not able to deal with the large number of requests. Note that the queue has a maximum size. Tasks that do not fit into the queue will be retried, but won’t show up in this metric.

Type: counter

Qualification: Saturation

daml.db-storage.write.executor.running¶

Summary: Number of database access tasks currently running

Description: Database access tasks run on an async executor. This metric shows the current number of tasks running in parallel.

Type: gauge

Qualification: Debug

daml.db-storage.write.executor.waittime¶

Summary: Scheduling time metric for database tasks

Description: Every database query is scheduled using an asynchronous executor with a queue. The time a task is waiting in this queue is monitored using this metric.

Type: timer

Qualification: Debug

daml.db.commit*¶

Summary: The time needed to perform the SQL query commit.

Description: This metric measures the time it takes to commit an SQL transaction relating to the <operation>. It roughly corresponds to calling commit() on a DB connection.

Type: timer

Qualification: Debug

- Labels:

name: The operation/pool for which the metric is registered.

daml.db.compression*¶

Summary: The time needed to decompress the SQL query result.

Description: Some index database queries that target contracts involve a decompression step. For such queries this metric represents the time it takes to decompress contract arguments retrieved from the database.

Type: timer

Qualification: Debug

- Labels:

name: The operation/pool for which the metric is registered.

daml.db.exec*¶

Summary: The time needed to run the SQL query and read the result.

Description: This metric encompasses the time measured by query and commit metrics. Additionally it includes the time needed to obtain the DB connection, optionally roll it back and close the connection at the end.

Type: timer

Qualification: Debug

- Labels:

name: The operation/pool for which the metric is registered.

daml.db.query*¶

Summary: The time needed to run the SQL query.

Description: This metric measures the time it takes to execute a block of code (on a dedicated executor) related to the <operation> that can issue multiple SQL statements such that all run in a single DB transaction (either committed or aborted).

Type: timer

Qualification: Debug

- Labels:

name: The operation/pool for which the metric is registered.

daml.db.translation*¶

Summary: The time needed to turn serialized Daml-LF values into in-memory objects.

Description: Some index database queries that target contracts and transactions involve a Daml-LF translation step. For such queries this metric stands for the time it takes to turn the serialized Daml-LF values into in-memory representation.

Type: timer

Qualification: Debug

- Labels:

name: The operation/pool for which the metric is registered.

daml.db.wait*¶

Summary: The time needed to acquire a connection to the database.

Description: SQL statements are run in a dedicated executor. This metric measures the time it takes between creating the SQL statement corresponding to the <operation> and the point when it starts running on the dedicated executor.

Type: timer

Qualification: Debug

- Labels:

name: The operation/pool for which the metric is registered.

daml.grpc.server¶

Summary: Distribution of the durations of serving gRPC requests.

Description:

Type: timer

Qualification: Latency

daml.grpc.server.handled¶

Summary: Total number of handled gRPC requests.

Description:

Type: meter

Qualification: Traffic

daml.grpc.server.messages.received¶

Summary: Total number of gRPC messages received (on either type of connection).

Description:

Type: meter

Qualification: Traffic

daml.grpc.server.messages.received.bytes¶

Summary: Distribution of payload sizes in gRPC messages received (both unary and streaming).

Description:

Type: histogram

Qualification: Traffic

daml.grpc.server.messages.sent¶

Summary: Total number of gRPC messages sent (on either type of connection).

Description:

Type: meter

Qualification: Traffic

daml.grpc.server.messages.sent.bytes¶

Summary: Distribution of payload sizes in gRPC messages sent (both unary and streaming).

Description:

Type: histogram

Qualification: Traffic

daml.grpc.server.requests.rejections*¶

Summary: Number of rejected requests due to active request limits.

Description: Counts the number of requests rejected because the active request limit was reached.

Type: counter

Qualification: Saturation

- Labels:

method: The method / service name limited.

service: The API the method belongs to

api: The API the method belongs to

daml.grpc.server.started¶

Summary: Total number of started gRPC requests (on either type of connection).

Description:

Type: meter

Qualification: Traffic

daml.http.requests¶

Summary: Total number of HTTP requests received.

Description:

Type: meter

Qualification: Debug

daml.http.requests¶

Summary: The duration of the HTTP requests.

Description:

Type: timer

Qualification: Debug

daml.http.requests.payload.bytes¶

Summary: Distribution of the sizes of payloads received in HTTP requests.

Description:

Type: histogram

Qualification: Debug

daml.http.responses.payload.bytes¶

Summary: Distribution of the sizes of payloads sent in HTTP responses.

Description:

Type: histogram

Qualification: Debug

daml.http.websocket.messages.received¶

Summary: Total number of received WebSocket messages.

Description:

Type: meter

Qualification: Debug

daml.http.websocket.messages.received.bytes¶

Summary: Distribution of the size of received WebSocket messages.

Description:

Type: histogram

Qualification: Debug

daml.http.websocket.messages.sent¶

Summary: Total number of sent WebSocket messages.

Description:

Type: meter

Qualification: Debug

daml.http.websocket.messages.sent.bytes¶

Summary: Distribution of the size of sent WebSocket messages.

Description:

Type: histogram

Qualification: Debug

daml.participant.api.commands.delayed_submissions¶

Summary: The number of the delayed Daml commands.

Description: The number of Daml commands that have been delayed internally because they have been evaluated to require the ledger time further in the future than the expected latency.

Type: meter

Qualification: Debug

daml.participant.api.commands.failed_command_interpretations¶

Summary: The number of Daml commands that failed in interpretation.

Description: The number of Daml commands that have been rejected by the interpreter (e.g. badly authorized action).

Type: meter

Qualification: Errors

daml.participant.api.commands.interactive_prepares¶

Summary: The time to prepare a transaction for interactive submission.

Description: The time to validate and interpret a command before it is returned to the caller for external signing.

Type: timer

Qualification: Latency

daml.participant.api.commands.max_in_flight_capacity¶

Summary: The maximum number of Daml commands that can await completion.

Description: The maximum number of Daml commands that can await completion in the Command Service.

Type: counter

Qualification: Debug

daml.participant.api.commands.max_in_flight_length¶

Summary: The number of the Daml commands awaiting completion.

Description: The number of Daml commands currently awaiting completion in the Command Service.

Type: counter

Qualification: Debug

daml.participant.api.commands.prepares_running¶

Summary: The number of the Daml commands for which transactions are currently being prepared by the ledger api server.

Description: The number of the Daml commands that are currently being prepared by the ledger api server (including validation, interpretation).

Type: counter

Qualification: Saturation

daml.participant.api.commands.reassignment_validation¶

Summary: The time to validate a reassignment command.

Description: The time to validate a submitted Daml command before it is fed to the interpreter.

Type: timer

Qualification: Debug

daml.participant.api.commands.submissions¶

Summary: The time to fully process a Daml command.

Description: The time to validate and interpret a command before it is handed over to the synchronization services to be finalized (either committed or rejected).

Type: timer

Qualification: Latency

daml.participant.api.commands.submissions_running¶

Summary: The number of the Daml commands that are currently being handled by the ledger api server.

Description: The number of the Daml commands that are currently being handled by the ledger api server (including validation, interpretation, and handing the transaction over to the synchronization services).

Type: counter

Qualification: Saturation

daml.participant.api.commands.taps_package_selection¶

Summary: The time spent on package selection in a single TAPS pass.

Description: The time spent on package selection in a single pass of the Topology-Aware Package Selection, before the command is handed to the Daml Engine for interpretation.

Type: timer

Qualification: Latency

daml.participant.api.commands.taps_passes¶

Summary: The number of TAPS passes during processing of a command.

Description: The number of Topology-Aware Package Selection passes during processing of a command.

Type: histogram

Qualification: Debug

daml.participant.api.commands.valid_submissions¶

Summary: The total number of the valid Daml commands.

Description: The total number of the Daml commands that have passed validation and were sent to interpretation in this ledger api server process.

Type: meter

Qualification: Debug

daml.participant.api.commands.validation¶

Summary: The time to validate a Daml command.

Description: The time to validate a submitted Daml command before it is fed to the interpreter.

Type: timer

Qualification: Debug

daml.participant.api.contract_store.lookup_batched¶

Summary: The time to execute batched contract lookup.

Description: The time to enqueue and execute batched contract lookup.

Type: timer

Qualification: Debug

daml.participant.api.contract_store.lookup_batched_contract_ids¶

Summary: The time to execute batched contract id lookup.

Description: The time to enqueue and execute batched contract id lookup.

Type: timer

Qualification: Debug

daml.participant.api.contract_store.lookup_batched_internal_ids¶

Summary: The time to execute batched internal id lookup.

Description: The time to enqueue and execute batched internal id lookup.

Type: timer

Qualification: Debug

daml.participant.api.contract_store.lookup_persisted¶

Summary: The time to look up a persisted contract by LF contract id.

Description: The time to enqueue and execute the lookup for a persisted contract by LF contract id.

Type: timer

Qualification: Debug

daml.participant.api.execution.cache.contract_state.register_update¶

Summary: The time spent to update the contract state cache.

Description: The total time spent in sequential update steps of the contract state caches updating logic. This metric is created with debugging purposes in mind.

Type: timer

Qualification: Debug

daml.participant.api.execution.cache.key_state.register_update¶

Summary: The time spent to update the key state cache.

Description: The total time spent in sequential update steps of the key state caches updating logic. This metric is created with debugging purposes in mind.

Type: timer

Qualification: Debug

daml.participant.api.execution.engine¶

Summary: The time spent executing a Daml command.

Description: The time spent by the Daml engine executing a Daml command (excluding fetching data).

Type: timer

Qualification: Debug

daml.participant.api.execution.engine_running¶

Summary: The number of Daml commands currently being executed.

Description: The number of the commands that are currently being executed by the Daml engine (excluding fetching data).

Type: counter

Qualification: Debug

daml.participant.api.execution.get_lf_package¶

Summary: The time to fetch individual Daml code packages during interpretation.

Description: The interpretation of a command in the ledger api server might require fetching multiple Daml packages. This metric exposes the time needed to fetch the packages that are necessary for interpretation.

Type: timer

Qualification: Debug

daml.participant.api.execution.lookup_active_contract¶

Summary: The time to look up individual active contracts during interpretation.

Description: The interpretation of a command in the ledger api server might require fetching multiple active contracts. This metric exposes the time to look up individual active contracts.

Type: timer

Qualification: Debug

daml.participant.api.execution.lookup_active_contract_count_per_execution¶

Summary: The number of the active contracts looked up per Daml command.

Description: The interpretation of a command in the ledger api server might require fetching multiple active contracts. This metric exposes the number of active contracts that must be looked up to process a Daml command.

Type: histogram

Qualification: Debug

daml.participant.api.execution.lookup_active_contract_per_execution¶

Summary: The compound time to look up all active contracts in a single Daml command.

Description: The interpretation of a command in the ledger api server might require fetching multiple active contracts. This metric exposes the compound time to look up all the active contracts in a single Daml command.

Type: timer

Qualification: Debug

daml.participant.api.execution.lookup_contract_key¶

Summary: The time to look up individual contract keys during interpretation.

Description: The interpretation of a command in the ledger api server might require fetching multiple contract keys. This metric exposes the time needed to look up individual contract keys.

Type: timer

Qualification: Debug

daml.participant.api.execution.lookup_contract_key_count_per_execution¶

Summary: The number of contract keys looked up per Daml command.

Description: The interpretation of a command in the ledger api server might require fetching multiple contract keys. This metric exposes the number of contract keys that must be looked up to process a Daml command.

Type: histogram

Qualification: Debug

daml.participant.api.execution.lookup_contract_key_per_execution¶

Summary: The compound time to look up all contract keys in a single Daml command.

Description: The interpretation of a command in the ledger api server might require fetching multiple contract keys. This metric exposes the compound time needed to look up all the contract keys in a single Daml command.

Type: timer

Qualification: Debug

daml.participant.api.execution.retry¶

Summary: The number of the interpretation retries.

Description: The total number of interpretation retries attempted due to mismatching ledger effective time in this ledger api server process.

Type: meter

Qualification: Debug

daml.participant.api.execution.total¶

Summary: The overall time spent interpreting a Daml command.

Description: The time spent interpreting a Daml command in the ledger api server (includes executing Daml and fetching data).

Type: timer

Qualification: Debug

daml.participant.api.execution.total_running¶

Summary: The number of Daml commands currently being interpreted.

Description: The number of the commands that are currently being interpreted (includes executing Daml code and fetching data).

Type: counter

Qualification: Debug

daml.participant.api.index.active_contracts_buffer_size¶

Summary: The buffer size for active contracts requests.

Description: A Pekko stream buffer is added at the end of all streaming queries to absorb temporary downstream backpressure (e.g. when the client is slower than upstream delivery throughput). This metric gauges the size of the buffer for queries requesting active contracts that satisfy a given predicate.

Type: counter

Qualification: Debug

daml.participant.api.index.completions_buffer_size¶

Summary: The buffer size for completions requests.

Description: A Pekko stream buffer is added at the end of all streaming queries to absorb temporary downstream backpressure (e.g. when the client is slower than upstream delivery throughput). This metric gauges the size of the buffer for queries requesting the completed commands in a specific period of time.

Type: counter

Qualification: Debug

daml.participant.api.index.db.active_contract_keys_lookup.batch.batch_size¶

Summary: The batch sizes in the lookup batch-loading Contract Service.

Description: The number of lookups contained in a batch, used in the batch-loading Contract Service.

Type: histogram

Qualification: Debug

daml.participant.api.index.db.active_contract_keys_lookup.batch.buffer_capacity¶

Summary: The capacity of the lookup queue.

Description: The maximum number of elements that can be kept in the queue of lookups in the batch-loading queue of the Contract Service.

Type: counter

Qualification: Debug

daml.participant.api.index.db.active_contract_keys_lookup.batch.buffer_delay¶

Summary: The queuing delay for the lookup queue.

Description: The queuing delay for the pending lookups in the batch-loading queue of the Contract Service.

Type: timer

Qualification: Debug

daml.participant.api.index.db.active_contract_keys_lookup.batch.buffer_length¶

Summary: The number of the currently pending lookups.

Description: The number of the currently pending lookups in the batch-loading queue of the Contract Service.

Type: counter

Qualification: Debug

daml.participant.api.index.db.active_contract_lookup.batch.batch_size¶

Summary: The batch sizes in the lookup batch-loading Contract Service.

Description: The number of lookups contained in a batch, used in the batch-loading Contract Service.

Type: histogram

Qualification: Debug

daml.participant.api.index.db.active_contract_lookup.batch.buffer_capacity¶

Summary: The capacity of the lookup queue.

Description: The maximum number of elements that can be kept in the queue of lookups in the batch-loading queue of the Contract Service.

Type: counter

Qualification: Debug

daml.participant.api.index.db.active_contract_lookup.batch.buffer_delay¶

Summary: The queuing delay for the lookup queue.

Description: The queuing delay for the pending lookups in the batch-loading queue of the Contract Service.

Type: timer

Qualification: Debug

daml.participant.api.index.db.active_contract_lookup.batch.buffer_length¶

Summary: The number of the currently pending lookups.

Description: The number of the currently pending lookups in the batch-loading queue of the Contract Service.

Type: counter

Qualification: Debug

daml.participant.api.index.db.flat_transactions_stream.translation¶

Summary: The time needed to turn serialized Daml-LF values into in-memory objects.

Description: Some index database queries that target contracts and transactions involve a Daml-LF translation step. For such queries this metric stands for the time it takes to turn the serialized Daml-LF values into in-memory representation.

Type: timer

Qualification: Debug

daml.participant.api.index.db.lookup_active_contract¶

Summary: The time spent fetching a contract using its id.

Description: This metric exposes the time spent fetching a contract using its id from the index db. It is then used by the Daml interpreter when evaluating a command into a transaction.

Type: timer

Qualification: Debug

daml.participant.api.index.db.lookup_key¶

Summary: The time spent looking up a contract using its key.

Description: This metric exposes the time spent looking up a contract using its key in the index db. It is then used by the Daml interpreter when evaluating a command into a transaction.

Type: timer

Qualification: Debug

daml.participant.api.index.db.tree_transactions_stream.translation¶

Summary: The time needed to turn serialized Daml-LF values into in-memory objects.

Description: Some index database queries that target contracts and transactions involve a Daml-LF translation step. For such queries this metric stands for the time it takes to turn the serialized Daml-LF values into in-memory representation.

Type: timer

Qualification: Debug

daml.participant.api.index.ledger_end_sequential_id¶

Summary: The sequential id of the current ledger end kept in memory.

Description: The ledger end’s sequential id is a monotonically increasing integer value representing the sequential id ascribed to the most recent ledger event ingested by the index db. Note that only a subset of all ledger events are ingested and given a sequential id. These are: creates, consuming exercises, non-consuming exercises, and divulgence events. This value can be treated as a counter of all such events visible to a given participant. This metric exposes the latest ledger end’s sequential id registered in the in-memory data set.

Type: gauge

Qualification: Debug

daml.participant.api.index.transaction_trees_buffer_size¶

Summary: The buffer size for transaction trees requests.

Description: A Pekko stream buffer is added at the end of all streaming queries to absorb temporary downstream backpressure (e.g. when the client is slower than upstream delivery throughput). This metric gauges the size of the buffer for queries requesting transaction trees.

Type: counter

Qualification: Debug

daml.participant.api.index.updates_buffer_size¶

Summary: The buffer size for streaming updates requests.

Description: A Pekko stream buffer is added at the end of all streaming queries to absorb temporary downstream backpressure (e.g. when the client is slower than the upstream delivery throughput). This metric gauges the size of the buffer for queries requesting updates in a specific period of time that satisfy a given predicate.

Type: counter

Qualification: Debug

daml.participant.api.indexer.deactivation_distances¶

Summary: Event sequence id distances between activations and deactivations.

Description: Histogram to collect the statistics of how long individual contracts lived.

Type: histogram

Qualification: Debug

daml.participant.api.indexer.events*¶

Summary: Number of ledger events processed.

Description: Represents the total number of ledger events processed (transactions, reassignments, party allocations).

Type: meter

Qualification: Debug

- Labels:

participant_id: The id of the participant.

user_id: The user generating the events.

event_type: The type of ledger event processed (transaction, reassignment, party_allocation).

status: Indicates if the event was accepted or not. Possible values accepted|rejected.

daml.participant.api.indexer.indexer_queue_blocked¶

Summary: The amount of blocked enqueue operations for the indexer queue.

Description: The indexer queue exerts backpressure by blocking asynchronous enqueue operations. This meter measures the number of such blocked operations, which signals backpressure coming from downstream.

Type: meter

Qualification: Debug

daml.participant.api.indexer.indexer_queue_buffered¶

Summary: The size of the buffer before the indexer.

Description: This buffer is located before the indexer. An increasing value signals mounting backpressure.

Type: meter

Qualification: Debug

daml.participant.api.indexer.indexer_queue_uncommitted¶

Summary: The amount of entries which are uncommitted for the indexer.

Description: Uncommitted entries comprise all blocked, buffered, and submitted but not yet committed entries. This amount signals the momentum of stream processing and has a theoretical maximum defined by the queue parameters.

Type: meter

Qualification: Debug

daml.participant.api.indexer.ledger_end_sequential_id¶

Summary: The sequential id of the current ledger end kept in the database.

Description: The ledger end’s sequential id is a monotonically increasing integer value representing the sequential id ascribed to the most recent ledger event ingested by the index db. Note that only a subset of all ledger events is ingested and given a sequential id: creates, consuming exercises, non-consuming exercises, and divulgence events. This value can be treated as a counter of all such events visible to a given participant. This metric exposes the latest ledger end’s sequential id registered in the database.

Type: gauge

Qualification: Debug

daml.participant.api.indexer.metered_events*¶

Summary: Number of individual ledger events (create, exercise, archive).

Description: Represents the number of individual ledger events constituting a transaction.

Type: meter

Qualification: Debug

- Labels:

participant_id: The id of the participant.

user_id: The user generating the events.

daml.participant.api.indexer.output_batched_buffer_length¶

Summary: The size of the queue between the indexer and the in-memory state updating flow.

Description: This counter counts batches of updates passed to the in-memory flow. Batches are dynamically sized based on the amount of backpressure exerted by the downstream stages of the flow.

Type: counter

Qualification: Debug

daml.participant.api.indexer.updates¶

Summary: The number of the state updates persisted to the database.

Description: The number of state updates persisted to the database. These include updates such as accepted transactions, configuration changes, party allocations, and rejections, as well as synthetic events emitted when the node learns that the sequencer clock has advanced without any actual ledger event, for example due to submission receipts or time proofs.

Type: counter

Qualification: Traffic

daml.participant.api.lapi.streams.acs_sent¶

Summary: The number of the active contracts sent by the ledger api.

Description: The total number of active contracts sent over the ledger api streams to all clients.

Type: counter

Qualification: Traffic

daml.participant.api.lapi.streams.completions_sent¶

Summary: The number of the command completions sent by the ledger api.

Description: The total number of completions sent over the ledger api streams to all clients.

Type: counter

Qualification: Traffic

daml.participant.api.lapi.streams.transaction_trees_sent¶

Summary: The number of the transaction trees sent over the ledger api.

Description: The total number of the transaction trees sent over the ledger api streams to all clients.

Type: counter

Qualification: Traffic

daml.participant.api.lapi.streams.updates_sent¶

Summary: The number of the flat updates sent over the ledger api.

Description: The total number of the flat updates sent over the ledger api streams to all clients.

Type: counter

Qualification: Traffic

daml.participant.api.services.current_ledger_end¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_active_contracts¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_completions¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_events_by_contract_id¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_lf_archive¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_participant_id¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_parties¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_update_by_id¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.get_update_by_offset¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.index.in_memory_fan_out_buffer.prune¶

Summary: The time to remove all elements from the in-memory fan-out buffer.

Description: It is possible to remove the oldest entries of the in-memory fan out buffer. This metric exposes the time needed to prune the buffer.

Type: timer

Qualification: Debug

daml.participant.api.services.index.in_memory_fan_out_buffer.push¶

Summary: The time to add a new event into the buffer.

Description: The in-memory fan-out buffer is a buffer that stores the last ingested maxBufferSize accepted and rejected submission updates as TransactionLogUpdate. It allows bypassing IndexDB persistence fetches for recent updates for flat and transaction tree streams, command completion streams and by-event-id and by-transaction-id flat and transaction tree lookups. This metric exposes the time spent on adding a new event into the buffer.

Type: timer

Qualification: Debug

daml.participant.api.services.index.in_memory_fan_out_buffer.size¶

Summary: The size of the in-memory fan-out buffer.

Description: The actual size of the in-memory fan-out buffer. This metric is mostly targeted for debugging purposes.

Type: histogram

Qualification: Saturation

daml.participant.api.services.index.write.allocate_party¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.index.write.prune¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.index.write.submit_reassignment¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.index.write.submit_reassignment_running¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: counter

Qualification: Debug

daml.participant.api.services.index.write.submit_transaction¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.index.write.submit_transaction_running¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: counter

Qualification: Debug

daml.participant.api.services.index.write.update_vetted_packages¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.index.write.upload_packages¶

Summary: The time to execute a write service operation.

Description: The write service is an internal interface for changing the state through the synchronization services. The methods in this interface are all methods that are supported uniformly across all ledger implementations. This metric exposes the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.latest_pruned_offsets¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.list_known_parties¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.list_lf_packages¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.lookup_active_contract¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.lookup_configuration¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.lookup_contract_key¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.lookup_contract_state¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.lookup_maximum_ledger_time¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.party_entries¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.prune¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.pruning.prune.completed¶

Summary: Total number of completed pruning processes.

Description:

Type: meter

Qualification: Debug

daml.participant.api.services.pruning.prune.started¶

Summary: Total number of started pruning processes.

Description:

Type: meter

Qualification: Debug

daml.participant.api.services.read.compute_highest_ranked_synchronizer_from_admissible¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.compute_party_vetting_map¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.get_connected_synchronizers¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.get_lf_archive¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.incomplete_reassignment_offsets¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.list_lf_packages¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.list_vetted_packages¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.select_routing_synchronizer¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.state_updates¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.read.validate_dar¶

Summary: The time to execute a read service operation.

Description: The read service is an internal interface for reading the events from the synchronization interfaces. The metrics expose the time needed to execute each operation.

Type: timer

Qualification: Debug

daml.participant.api.services.transaction_trees¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.api.services.transactions¶

Summary: The time to execute an index service operation.

Description: The index service is an internal component responsible for access to the index db data. Its operations are invoked whenever a client request received over the ledger api requires access to the index db. This metric captures time statistics of such operations.

Type: timer

Qualification: Debug

daml.participant.console.tx-node-count¶

Summary: Number of nodes per transaction histogram, measured using canton console ledger_api.updates.start_measure

Description:

Type: histogram

Qualification: Debug

daml.participant.console.tx-nodes-emitted¶

Summary: Total number of nodes emitted, measured using canton console ledger_api.updates.start_measure

Description:

Type: meter

Qualification: Debug

daml.participant.console.tx-size¶

Summary: Transaction size histogram, measured using canton console ledger_api.updates.start_measure

Description:

Type: histogram

Qualification: Debug

daml.participant.declarative_api.errors¶

Summary: Errors for the last update

Description: The node attempts to apply the changes configured in the declarative config file. A positive number means that some items failed to be synchronised. A negative number means that the overall synchronisation procedure failed with an error. The values are: 0 = everything good, -1 = config file unreadable, -2 = context could not be created, -3 = failure while applying items, -9 = exception caught.

Type: gauge

Qualification: Errors
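
As an illustration of how the value of daml.participant.declarative_api.errors could be interpreted in an alerting script, the following minimal Python sketch maps a gauge reading to the meanings listed in the description. The function name and the mapping table are hypothetical helpers written for this example; they are not part of Canton, and how the gauge value is scraped is left out.

```python
# Meanings of the negative codes, copied from the metric description above.
# This table is an illustrative helper, not a Canton API.
FAILURE_CODES = {
    -1: "config file unreadable",
    -2: "context could not be created",
    -3: "failure while applying items",
    -9: "exception caught",
}


def describe_declarative_api_errors(value: int) -> str:
    """Translate a declarative_api.errors gauge reading into a short message."""
    if value == 0:
        return "everything good"
    if value > 0:
        # A positive number counts the items that failed to be synchronised.
        return f"{value} item(s) failed to be synchronised"
    # A negative number means the overall synchronisation procedure failed.
    return FAILURE_CODES.get(value, f"unknown failure code {value}")


if __name__ == "__main__":
    for reading in (0, 3, -2):
        print(reading, "->", describe_declarative_api_errors(reading))
```

Negative codes that are not listed in the description are reported as unknown rather than guessed.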

daml.participant.declarative_api.items¶

Summary: Number of items managed through the declarative API

Description: This metric indicates the number of items managed through the declarative API

Type: gauge

Qualification: Debug

daml.participant.inflight_validation_requests*¶

Summary: Number of requests being validated.

Description: Number of requests that are currently being validated. This also covers requests submitted by other participants.

Type: gauge

Qualification: Saturation

- Labels:

participant: The id of the participant for which the value applies.

daml.participant.sync.commitments.catchup-mode-enabled¶

Summary: Measures how many times the commitment processor catch-up mode has been triggered.

Description: Participant nodes compute bilateral commitments at regular intervals. This metric exposes how often the catch-up mode has been activated. The catch-up mode is triggered according to catch-up config and happens if the participant lags behind on computation. A healthy value is 0. An increasing value indicates intermittent periods when a participant alternates between healthy and struggling to keep up with commitment computation. However, we do not see a constantly increasing value for a participant that is consistently behind commitment computation because, once catch-up mode is activated, the participant remains in catch-up mode until it has completely caught up, and only triggers the metric once. In order to troubleshoot non-zero values, the operator should cross-correlate this value with the daml.participant.sync.commitments.compute metric.

Type: meter

Qualification: Debug

daml.participant.sync.commitments.compute¶

Summary: Measures the time that the participant node spends computing commitments.

Description: Participant nodes compute bilateral commitments at regular intervals, i.e., reconciliation intervals. This metric exposes the time spent on each computation in milliseconds. There are two cases that the operator should pay attention to. First, fluctuations in this value are expected if the number of counter-participants or common stakeholder groups changes. However, changes with no apparent reason could indicate a bug and the operator should monitor closely. Second, it is a cause of concern if the value starts approaching or is greater than the reconciliation interval: The participant will perpetually lag behind, because it needs to compute commitments more frequently than it can manage. The operator should consider asking the synchronizer operator to increase the reconciliation interval if the increase in commitment computation is expected, or otherwise investigate the cause.

Type: timer

Qualification: Debug
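
The second case described above, where the computation time approaches or exceeds the reconciliation interval, can be sketched as a simple ratio check. The following Python snippet is a minimal sketch under stated assumptions: the function name, the status strings, and the 0.8 warning ratio are hypothetical choices for illustration, and the reconciliation interval must be supplied by the operator from the synchronizer configuration.

```python
def commitment_compute_status(
    compute_ms: float,
    reconciliation_interval_ms: float,
    warn_ratio: float = 0.8,  # hypothetical threshold for "approaching"
) -> str:
    """Classify a commitments.compute reading against the reconciliation interval."""
    if reconciliation_interval_ms <= 0:
        raise ValueError("reconciliation interval must be positive")
    ratio = compute_ms / reconciliation_interval_ms
    if ratio >= 1.0:
        # Computation takes at least a full interval: the participant
        # cannot keep up and will lag behind.
        return "lagging"
    if ratio >= warn_ratio:
        return "approaching"
    return "ok"


if __name__ == "__main__":
    # Example: a 60 second reconciliation interval.
    print(commitment_compute_status(30_000, 60_000))
    print(commitment_compute_status(50_000, 60_000))
    print(commitment_compute_status(75_000, 60_000))
```

A suitable warning ratio depends on how much headroom the operator wants before investigating.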

daml.participant.sync.commitments.last-incoming-processed¶

Summary: Timestamp of the latest processed incoming ACS commitment period end in microseconds since unix epoch

Description: Timestamp of the latest incoming ACS commitment period end that was fully processed by the participant.

Type: gauge

Qualification: Latency

daml.participant.sync.commitments.last-incoming-received¶

Summary: Timestamp of the latest received incoming ACS commitment period end in microseconds since unix epoch

Description: Timestamp of the latest incoming ACS commitment period end that has been received and enqueued, but not yet processed by the participant. To measure the latency of particular counter participants, use one of the counter-participant-latency metrics.

Type: gauge

Qualification: Latency

daml.participant.sync.commitments.last-locally-checkpointed¶

Summary: Record time of the latest checkpointed ACS commitment in microseconds since unix epoch

Description: Timestamp of the latest checkpointed ACS commitment in microseconds. Crash recovery will start reingesting from this timestamp on or from the latest locally completed ACS commitment interval on, whichever is later.

Type: gauge

Qualification: Latency

daml.participant.sync.commitments.last-locally-completed¶

Summary: Timestamp of the latest locally completed ACS commitment interval in microseconds since unix epoch

Description: Timestamp of the latest locally completed ACS commitment interval. Crash recovery will start reingesting from this timestamp on or from the latest checkpointed ACS commitment interval on, whichever is later.

Type: gauge

Qualification: Latency
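
The last-locally-checkpointed and last-locally-completed gauges both report microseconds since the unix epoch, and crash recovery starts from whichever of the two is later. The following Python sketch shows one way to convert such readings into readable timestamps and to pick the recovery starting point. The function names are hypothetical and the sample values are made up for illustration.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def micros_to_utc(micros: int) -> datetime:
    """Convert microseconds since the unix epoch to an aware UTC datetime."""
    # Adding a timedelta avoids floating point rounding of large values.
    return EPOCH + timedelta(microseconds=micros)


def recovery_start_micros(last_checkpointed: int, last_completed: int) -> int:
    """Return the later of the two timestamps, as the descriptions state."""
    return max(last_checkpointed, last_completed)


if __name__ == "__main__":
    checkpointed = 1_700_000_000_000_000  # made-up sample reading
    completed = 1_700_000_060_000_000     # made-up sample reading
    start = recovery_start_micros(checkpointed, completed)
    print(micros_to_utc(start).isoformat())
```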

daml.participant.sync.commitments.sequencing-time¶

Summary: Measures the time between the end of a commitment period, and the time when the sequencer observes the corresponding commitment.

Description: Participant nodes compute bilateral commitments at regular intervals. After a participant computes a commitment, it sends it for sequencing. The time between the end of a commitment interval and sequencing is measured in milliseconds. Because the commitment computation is included in the measured time, the value is always greater than the daml.participant.sync.commitments.compute metric. The operator should pay attention to fluctuations of this value. An increase can be expected, e.g., because the computation time increases. However, an increase can also be a cause of concern, because it can indicate that the participant is lagging behind in processing messages and computing commitments, which is accompanied by ACS_COMMITMENT_DEGRADATION warnings in the participant logs. An increase can also indicate that the sequencer is slow in sequencing the commitment messages. To check for this, the operator should cross-correlate with sequencing metrics such as daml.sequencer-client.submissions.sequencing and daml.sequencer-client.handler.delay. If the sequencer is slow, the operator should consider changing the preferred sequencer configuration.

Type: gauge

Qualification: Debug

daml.participant.sync.commitments.synchronizer.largest-counter-participant-latency¶

Summary: The highest latency in micros for commitments outstanding from counter-participants for more than a threshold-number of reconciliation intervals.

Description: Participant nodes compute bilateral commitments at regular intervals and send them. This metric is the default indicator of a counter-participant being slow. The metric exposes the highest latency of a counter-participant, measured by subtracting the highest known counter-participant latency from the most recent period processed by the participant. A counter-participant has to send a commitment at least once in order to appear here. The operator of a participant can configure a default threshold per synchronizer that the participant connects to. The smaller the threshold, the more sensitive the metric is to even small delays in receiving commitments from counter-participants. For example, for a threshold of 5 intervals and a reconciliation interval of 1 minute, the metric measures the latency of counter-participants that have sent no commitments for periods covering the last 5 minutes observed by the participant.

Type: gauge

Qualification: Debug
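
The threshold arithmetic in the example above (5 intervals of 1 minute covering the last 5 minutes) can be written out as a small Python sketch. The function name is a hypothetical helper for illustration only; the threshold and the reconciliation interval are values the operator reads from their own configuration.

```python
from datetime import timedelta


def latency_window(threshold_intervals: int,
                   reconciliation_interval: timedelta) -> timedelta:
    """Time span a counter-participant must be silent for before it is measured."""
    if threshold_intervals < 0:
        raise ValueError("threshold must not be negative")
    return threshold_intervals * reconciliation_interval


if __name__ == "__main__":
    # The example from the description: 5 intervals of 1 minute each.
    print(latency_window(5, timedelta(minutes=1)))
```

A smaller threshold shortens this window, which is why the metric becomes more sensitive to small delays.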

daml.participant.sync.commitments.synchronizer.largest-distinguished-counter-participant-latency¶

Summary: The highest latency in micros for commitments outstanding from distinguished counter-participants for more than a threshold-number of reconciliation intervals.

Description: Participant nodes compute bilateral commitments at regular intervals and send them. This metric indicates that a distinguished counter-participant is slow, i.e., the participant cannot confirm that its state is the same as that of a counter-participant with whom the operator has an important business relation. The metric exposes the highest latency of a counter-participant, measured by subtracting the highest known counter-participant latency from the most recent period processed by the participant. A counter-participant has to send a commitment at least once in order to appear here. The operator of a participant can configure a default threshold per synchronizer that the participant connects to. The smaller the threshold, the more sensitive the metric is to even small delays in receiving commitments from counter-participants. For example, for a threshold of 5 intervals and a reconciliation interval of 1 minute, the metric measures the latency of counter-participants that have sent no commitments for periods covering the last 5 minutes observed by the participant.

Type: gauge

Qualification: Debug

daml.participant.sync.conflict-detection.sequencer-counter-queue¶

Summary: Size of conflict detection sequencer counter queue

Description: The task scheduler works through tasks in timestamp order, scheduling the tasks whenever a new timestamp has been observed. This metric exposes the number of unprocessed sequencer messages that will trigger a timestamp advancement.

Type: counter

Qualification: Debug

daml.participant.sync.in-flight-submission-synchronizer-tracker.unsequenced-in-flight-submissions¶

Summary: Number of unsequenced submissions in-flight.

Description: Number of unsequenced submissions in-flight. Unsequenced in-flight submissions are tracked in memory, so a high value here translates into memory pressure.

Type: gauge

Qualification: Saturation

daml.participant.sync.inflight-validations¶

Summary: Number of requests being validated on the synchronizer.

Description: Number of requests that are currently being validated on the synchronizer. This also covers requests submitted by other participants.

Type: counter

Qualification: Saturation

daml.participant.sync.protocol-messages.confirmation-request-creation¶

Summary: Time to create a transaction confirmation request

Description: The time that the transaction protocol processor needs to create a transaction confirmation request.

Type: timer

Qualification: Latency

daml.participant.sync.protocol-messages.confirmation-request-size¶

Summary: Confirmation request size

Description: Records the histogram of the sizes of (transaction) confirmation requests.

Type: histogram

Qualification: Debug

daml.participant.sync.protocol-messages.transaction-message-receipt¶

Summary: Time to parse and decrypt a transaction message

Description: The time that the transaction protocol processor needs to parse and decrypt an incoming confirmation request.

Type: timer

Qualification: Debug

daml.participant.sync.request-tracker.sequencer-counter-queue¶

Summary: Size of record order publisher sequencer counter queue

Description: Same as for conflict-detection, but measures the sequencer counter queue used for publishing to the Ledger API server according to record time.

Type: counter

Qualification: Debug

daml.pruning¶

Summary: Duration of prune operations.

Description: This timer exposes the duration of pruning requests from the Canton portion of the ledger.

Type: timer

Qualification: Saturation

daml.pruning.max-event-age¶

Summary: Age of oldest unpruned event.

Description: This gauge exposes the age of the oldest unpruned event in hours as a way to quantify the pruning backlog.

Type: gauge

Qualification: Saturation

daml.sequencer-client.handler.actual-in-flight-event-batches¶

Summary: Nodes process the events from the synchronizer’s sequencer in batches. This metric tracks how many such batches are processed in parallel.

Description: Incoming messages are processed by a sequencer client, which combines them into batches of size up to ‘event-inbox-size’ before sending them to an application handler for processing. Depending on the system’s configuration, the rate at which event batches are sent to the handler may be throttled to avoid overwhelming it with too many events at once. The metric tracks how many of these batches have been sent to the application handler but have not yet been fully processed. Indicators that the configured upper bound may be too low: this metric is constantly close to the configured maximum, which is exposed via ‘max-in-flight-event-batches’, while the system’s resources are under-utilized. Indicators that the configured upper bound may be too high: out-of-memory errors crashing the JVM, or frequent garbage collection cycles that slow down processing. This metric can help identify potential bottlenecks or issues with the application’s processing of events and provide insights into the overall workload of the system.

Type: counter

Qualification: Saturation
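The "upper bound may be too low" indicator from the description above could be turned into a simple check along the following lines. This is a sketch under stated assumptions: the function name, the sample format, and all three cut-off values (`near_max_ratio`, `min_fraction`, `low_cpu`) are hypothetical choices for illustration, not values recommended by Canton.

```python
def in_flight_limit_looks_too_low(actual_samples, configured_max, cpu_utilization,
                                  near_max_ratio=0.9, min_fraction=0.95, low_cpu=0.5):
    """Heuristic: the limit may be too low if the actual number of in-flight
    batches is almost always near the configured maximum while the CPU is idle.

    actual_samples:  recent values of 'actual-in-flight-event-batches'
    configured_max:  value of 'max-in-flight-event-batches'
    cpu_utilization: average CPU utilization in [0, 1] over the same period
    All cut-offs are illustrative assumptions.
    """
    if configured_max <= 0 or not actual_samples:
        return False
    near_max = sum(1 for s in actual_samples if s >= near_max_ratio * configured_max)
    constantly_near_max = near_max / len(actual_samples) >= min_fraction
    return constantly_near_max and cpu_utilization < low_cpu


# Saturated handler queue on a mostly idle machine: worth raising the limit.
print(in_flight_limit_looks_too_low([10] * 20, configured_max=10, cpu_utilization=0.2))
```

The opposite condition (limit too high) shows up as JVM memory symptoms rather than in this metric, so it is not covered by the sketch.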

daml.sequencer-client.handler.application-handle¶

Summary: Timer monitoring time and rate of sequentially handling the event application logic

Description: All events are received sequentially. This handler records the rate and time it takes the application (participant or mediator) to handle the events.

Type: timer

Qualification: Debug

daml.sequencer-client.handler.delay¶

Summary: The delay on the event processing in milliseconds

Description: Every message received from the sequencer carries a timestamp that was assigned by the sequencer when it sequenced the message. This timestamp is called the sequencing timestamp. The component receiving the message on the participant or mediator is the sequencer client, while on the block sequencer itself, it’s the block update generator. Upon having received the same message from enough sequencers (as configured by the trust threshold), the sequencer client computes the difference between the sequencing time and the computer’s local clock and exposes this difference as the given metric. The difference includes the clock skew and the processing latency between the sequencer assigning the timestamp and the recipient receiving the message from enough sequencers. If the difference is large compared to the usual latencies, clock skew can be ruled out, and enough sequencers are not slow, then the node is still trying to catch up with events that the sequencers sequenced a while ago. This can happen after the node has been offline for a while or if the node is too slow to keep up with the messaging load.

Type: gauge

Qualification: Debug
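The quantity described above can be sketched as a plain subtraction. The function below is hypothetical and only illustrates the arithmetic; the sign convention (local clock minus sequencing time) is an assumption, since the description states only that the difference between the two is exposed.

```python
def event_processing_delay_ms(sequencing_time_micros: int,
                              local_clock_micros: int) -> float:
    """Delay in milliseconds between the sequencing timestamp and the local clock.

    Both inputs are microseconds since the Unix epoch. The result includes any
    clock skew, so it can be negative if the local clock runs behind.
    Hypothetical helper; the sign convention is an assumption.
    """
    return (local_clock_micros - sequencing_time_micros) / 1000.0


# An event sequenced 250 ms before the local clock reading.
print(event_processing_delay_ms(1_000_000, 1_250_000))  # 250.0
```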

daml.sequencer-client.handler.last-sequencing-time-micros¶

Summary: The sequencing time of the last processed event in microseconds since unix epoch

Description: Every message received from the sequencer carries a timestamp that was assigned by the sequencer when it sequenced the message. This timestamp is called the sequencing timestamp. The component receiving the message on the participant or mediator is the sequencer client, while on the block sequencer itself, it’s the block update generator. Upon having received the same message from enough sequencers (as configured by the trust threshold), this metric is updated with the sequencing time of that message.

Type: gauge

Qualification: Debug
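Because this gauge is reported in microseconds since the Unix epoch, it usually needs converting before it is readable. A minimal sketch of that conversion follows; the function name is hypothetical and the sample value is arbitrary.

```python
from datetime import datetime, timedelta, timezone

UNIX_EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def sequencing_time_from_micros(micros: int) -> datetime:
    """Convert microseconds since the Unix epoch to a timezone-aware UTC datetime.

    Uses timedelta arithmetic to avoid floating-point rounding of microseconds.
    """
    return UNIX_EPOCH + timedelta(microseconds=micros)


# Arbitrary sample gauge value, for illustration only.
last_sequencing_time = sequencing_time_from_micros(1_700_000_000_000_000)
print(last_sequencing_time.isoformat())
```

Subtracting the result from the current UTC time gives a rough indication of how far behind the node's processing is, subject to clock skew.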

daml.sequencer-client.handler.max-in-flight-event-batches¶

Summary: Nodes process the events from the synchronizer’s sequencer in batches. This metric tracks the upper bound of such batches being processed in parallel.

Description: Incoming messages are processed by a sequencer client, which combines them into batches of size up to ‘event-inbox-size’ before sending them to an application handler for processing. Depending on the system’s configuration, the rate at which event batches are sent to the handler may be throttled to avoid overwhelming it with too many events at once. The limit is configured by the ‘maximum-in-flight-event-batches’ parameter in the sequencer-client config. The metric shows the configured upper limit on how many batches the application handler may process concurrently. The metric ‘actual-in-flight-event-batches’ tracks the actual number of currently processed batches.

Type: gauge

Qualification: Debug

daml.sequencer-client.handler.sequencer-events¶

Summary: Number of received events from the sequencer

Description: A participant reads events from the sequencer. This metric captures the count and rate of events.

Type: counter

Qualification: Debug

daml.sequencer-client.sequencer-connection-pool.active-subscriptions¶

Summary: Number of active subscriptions in the subscription pool

Description: This metric indicates the current number of subscriptions that are active.

Type: gauge

Qualification: Saturation

daml.sequencer-client.sequencer-connection-pool.grpc-requests¶

Summary: Number of gRPC requests sent on this connection

Description: This metric indicates the number of gRPC requests that have been sent on this connection.

Type: counter

Qualification: Saturation

daml.sequencer-client.sequencer-connection-pool.subscription-threshold¶

Summary: Sum of trust threshold and liveness margin configured in the subscription pool

Description: The liveness margin determines how many subscriptions on different sequencers are continuously maintained, beyond the minimum number defined by the trust threshold. In other words, the subscription pool strives to maintain (trust threshold + liveness margin) active subscriptions at all times. This provides tolerance to subscriptions failing, enabling the node to continue operating while some sequencers are down.

Type: gauge

Qualification: Saturation
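The relationship between the trust threshold, the liveness margin, and the number of active subscriptions can be sketched as below. The function names and the three status labels are hypothetical and chosen for illustration; they are not states reported by Canton.

```python
def subscription_threshold(trust_threshold: int, liveness_margin: int) -> int:
    """Target number of active subscriptions the pool strives to maintain."""
    return trust_threshold + liveness_margin


def subscription_status(active: int, trust_threshold: int, liveness_margin: int) -> str:
    """Classify the pool using illustrative labels (assumptions, not Canton states)."""
    target = subscription_threshold(trust_threshold, liveness_margin)
    if active >= target:
        return "at-target"
    if active >= trust_threshold:
        return "margin-consumed"  # still enough subscriptions to accept events
    return "below-trust-threshold"


# Trust threshold 2, liveness margin 1: the pool aims for 3 subscriptions
# and tolerates losing 1 of them.
print(subscription_threshold(2, 1))
print(subscription_status(active=2, trust_threshold=2, liveness_margin=1))
```

Comparing ‘active-subscriptions’ against both thresholds in this way distinguishes a pool that has merely used up its margin from one that can no longer reach the trust threshold.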

daml.sequencer-client.sequencer-connection-pool.tracked-connections¶

Summary: Number of connections tracked by the connection pool

Description: The configuration of the connection pool defines the parameters of the sequencer connections. This metric shows the current number of those connections.

Type: gauge

Qualification: Saturation

daml.sequencer-client.sequencer-connection-pool.trust-threshold¶

Summary: Trust threshold configured in the connection pool

Description: The trust threshold determines how many connections to sequencers must be available and consistent (same synchronizer ID, same protocol version, same static parameters) for the connection pool to initialize. Furthermore, it also determines the number of sequencer subscriptions that must deliver identical copies of an event for that event to be accepted and processed by the node.

Type: gauge

Qualification: Saturation
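The second role of the trust threshold, accepting an event only once enough subscriptions have delivered identical copies, can be modeled with a small aggregator. This is a simplified sketch and not Canton's implementation: the class, its method, and the representation of an event as a `(timestamp, payload)` pair are all assumptions made for illustration.

```python
from collections import defaultdict


class ThresholdAggregator:
    """Accept an event once `trust_threshold` distinct sequencers delivered identical copies."""

    def __init__(self, trust_threshold: int):
        if trust_threshold < 1:
            raise ValueError("trust_threshold must be at least 1")
        self.trust_threshold = trust_threshold
        self._seen = defaultdict(set)  # (timestamp, payload) -> sequencer ids
        self._accepted = set()         # events already handed on for processing

    def deliver(self, sequencer_id: str, timestamp: int, payload: bytes) -> bool:
        """Record one delivery; return True exactly once, when the threshold is first reached."""
        key = (timestamp, payload)
        if key in self._accepted:
            return False  # late copy of an event that was already accepted
        self._seen[key].add(sequencer_id)  # repeated deliveries from one sequencer count once
        if len(self._seen[key]) >= self.trust_threshold:
            self._accepted.add(key)
            del self._seen[key]
            return True
        return False


agg = ThresholdAggregator(trust_threshold=2)
print(agg.deliver("seq-1", 1, b"event"))  # False: only one copy so far
print(agg.deliver("seq-2", 1, b"event"))  # True: second identical copy arrived
```

Copies that differ in timestamp or payload are counted separately, so a single deviating sequencer cannot cause an event to be accepted on its own when the threshold is above 1.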

daml.sequencer-client.sequencer-connection-pool.validated-connections¶

Summary: Number of connections validated by the connection pool

Description: This metric indicates the current number of connections that are up and validated. These connections are available for components of the node that need to communicate with the synchronizer.

Type: gauge

Qualification: Saturation

daml.sequencer-client.submissions.amplification¶

Summary: Rate and timings of submission request attempts to a sequencer

Description: This timer is started when a submission request attempt is sent to the sequencer, and completed when it is observed as sequenced. If the attempt is not observed as sequenced before the amplification patience expires, no timing will be recorded for this and the following attempts.

Type: timer

Qualification: Latency

daml.sequencer-client.submissions.amplified-attempts¶

Summary: Count of send request attempts which are amplified

Description: Counter that is incremented when a send request attempt that did not receive a synchronous error from the sequencer is not observed as sequenced before the amplification patience expires, so that a new attempt is sent.

Type: meter

Qualification: Errors

daml.sequencer-client.submissions.attempt-sync-errors¶

Summary: Count of send request attempts which receive a synchronous error